Modular Signal

#1: Fleshing out the modular synthesis metaphor

I was surprised at how well, and quickly, Fugue became capable of producing sound. Reviewing the code, however, it was clear the next step was to clean things up and start establishing clear, extensible patterns. As a follow-up to the first prompt, I made a request:

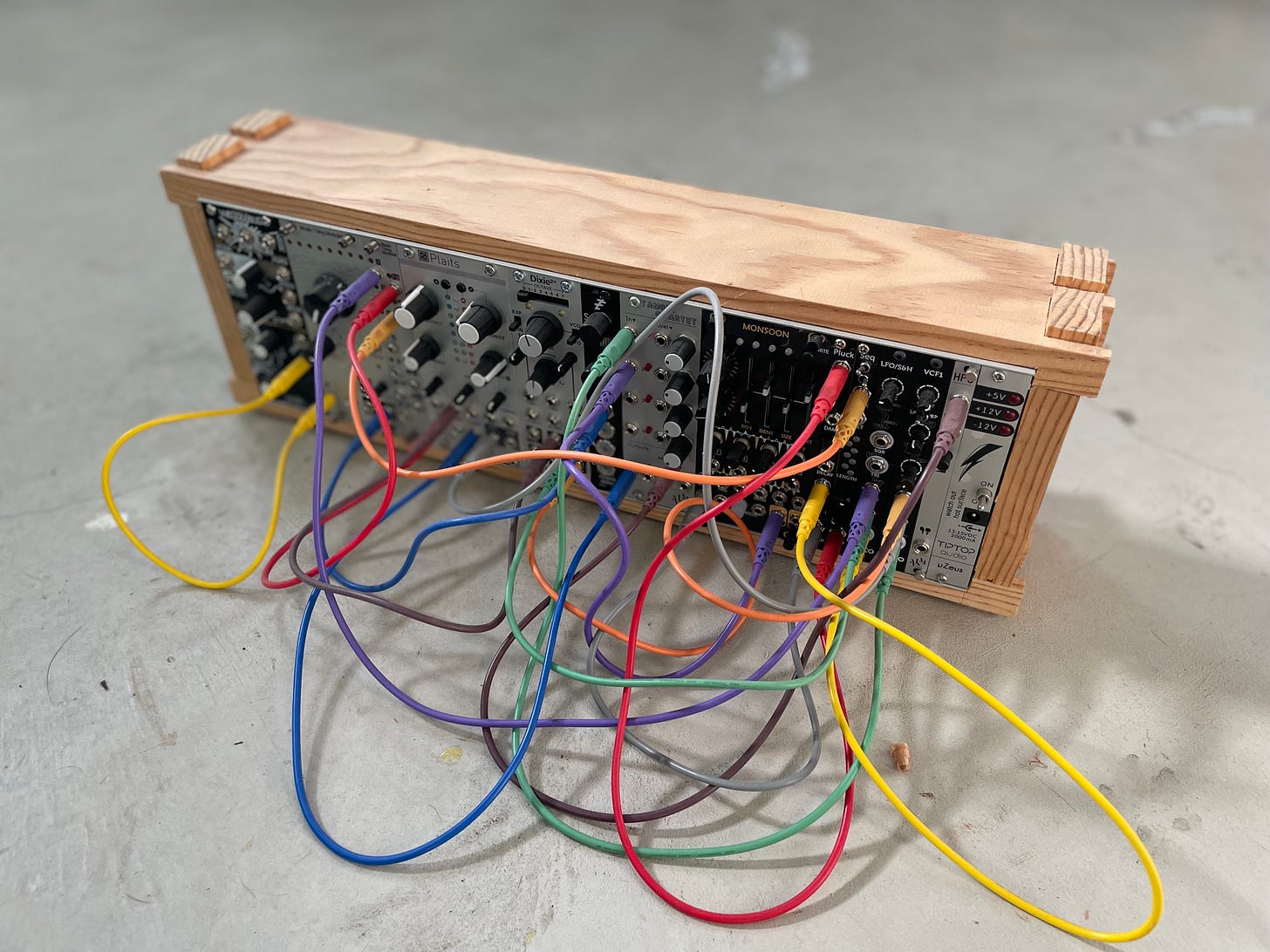

Let's start to make the components more modular. I mean modular both in terms of reusability and in terms of being more like modular synthesis. Individual objects should be largely self-contained and then connected to each other. WebAudio is a good example of this model, as is the `=>` operator in Chuck. For this library, we can use a `connect` function for this purpose. Start with the `Clock` struct. This can be considered a pure generator module that does not accept other "signals". Since we aren't dealing with pure voltage, like with modular synthesis, we may need multiple signal types. Still, I would like to keep the metaphor of chaining signals as close as possible to modular synthesis and Eurorack as possible.
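To make the request concrete, here is a minimal sketch of what a `connect`-style API could look like. It is an illustration only: the `Module` trait, `Gain`, `Chain`, and the field names are all hypothetical and are not taken from Fugue's code. The `Clock` is modeled as a pure generator that ignores its input and emits a gate on each step boundary.

```rust
// Hypothetical sketch: none of these names come from Fugue itself.

/// Anything that turns one input value into one output value per tick.
trait Module {
    fn process(&mut self, input: f32) -> f32;
}

/// A pure generator: ignores its input and emits a gate (1.0) at the start of each step.
struct Clock {
    samples_per_step: u32,
    counter: u32,
}

impl Module for Clock {
    fn process(&mut self, _input: f32) -> f32 {
        let gate = if self.counter == 0 { 1.0 } else { 0.0 };
        self.counter = (self.counter + 1) % self.samples_per_step;
        gate
    }
}

/// Scales whatever arrives at its input.
struct Gain {
    amount: f32,
}

impl Module for Gain {
    fn process(&mut self, input: f32) -> f32 {
        input * self.amount
    }
}

/// Two modules patched in series. The pair is itself a module, so chains can nest.
struct Chain<A: Module, B: Module> {
    from: A,
    to: B,
}

impl<A: Module, B: Module> Module for Chain<A, B> {
    fn process(&mut self, input: f32) -> f32 {
        let mid = self.from.process(input);
        self.to.process(mid)
    }
}

/// Patch `from` into `to`, like plugging a cable between two modules.
fn connect<A: Module, B: Module>(from: A, to: B) -> Chain<A, B> {
    Chain { from, to }
}
```

With this shape, `connect(Clock { samples_per_step: 4, counter: 0 }, Gain { amount: 0.5 })` yields a module whose `process` emits a scaled gate every fourth tick.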

After a few minutes of churning, including several minutes working through new errors, Sonnet 3.5 (via Copilot) emerged with a new set of components that it declared were more closely modeled on modular synthesis. However, the work was incomplete, and the original dorian_melody example no longer produced sound.

Please update the dorian_melody example to use the new system

To do this, the AudioEngine needed to be rewritten. It seemed like a clear oversight on the agent’s part. As directed, it chugged along with a replacement. Afterward, I noticed it had left the old, now-unused engine in place.

If it is no longer used, we can remove the old audio engine

Digging a little deeper, I started seeing some issues. In its incessant drive to please me as quickly as possible, the model had created some leaky abstractions. Modules needed direct knowledge of others, creating a spaghetti-like web of dependencies. This was going to take several steps to unravel, but we had to begin somewhere.

Let's refactor the `ModularAudioEngine` a bit. Instead of `start_voice` function that expects a `MelodyParams` object, it should be more generic. It can have a `start` method and a `stop` method to control shared resources. Beyond that, though, it should be similar to other nodes. In a sense, this can be an output DAC node.

With that, the audio engine became just another module, which was an important step in the right direction.
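One way to picture an engine that is "just another module" is sketched below. This is an assumption-laden illustration, not the actual `ModularAudioEngine`: the `Node` trait and `Dac` type are hypothetical, and the node collects samples in a buffer where a real engine would hand them to an audio backend.

```rust
// Hypothetical sketch: a real engine would pass samples to an audio backend
// instead of storing them in a Vec.

/// Same processing interface as every other node in the graph.
trait Node {
    fn process(&mut self, input: f32) -> f32;
}

/// Output node. `start` and `stop` guard the shared resource (here, a buffer).
struct Dac {
    running: bool,
    written: Vec<f32>,
}

impl Dac {
    fn new() -> Self {
        Dac { running: false, written: Vec::new() }
    }

    fn start(&mut self) {
        self.running = true;
    }

    fn stop(&mut self) {
        self.running = false;
    }
}

impl Node for Dac {
    fn process(&mut self, input: f32) -> f32 {
        // Samples only reach the "device" while the node is running.
        if self.running {
            self.written.push(input);
        }
        input // pass the sample through unchanged
    }
}
```

The point of the design is that nothing upstream needs to know it is talking to an output device; the only extra surface is `start` and `stop`.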

But we had a problem: sonically, the dorian_melody example was now all wrong. It still produced a random series of tones, but the pulse, declared to be 8th notes at a bpm of 120, sounded like 64th notes at around 100bpm.
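The size of that discrepancy is easy to work out. The sketch below assumes the beat is a quarter note and a 44.1 kHz sample rate (neither is stated above), and the function names are hypothetical. An 8th note at 120 bpm should last 0.25 s, while a 64th note at 100 bpm lasts about 0.0375 s, so the notes were arriving roughly six to seven times too fast.

```rust
/// Seconds occupied by one note at the given tempo.
/// `note_value` is the note's denominator: 4 = quarter, 8 = eighth, 64 = sixty-fourth.
/// Assumes the beat is a quarter note.
fn seconds_per_note(bpm: f64, note_value: u32) -> f64 {
    let seconds_per_beat = 60.0 / bpm;
    seconds_per_beat * 4.0 / note_value as f64
}

/// The same duration expressed in samples, rounded to the nearest sample.
fn samples_per_note(bpm: f64, note_value: u32, sample_rate: u32) -> u64 {
    (seconds_per_note(bpm, note_value) * sample_rate as f64).round() as u64
}
```

For example, `samples_per_note(120.0, 8, 44_100)` gives the number of samples a clock should count between 8th-note pulses at the intended tempo.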

It took weeks to work through this issue. Both the agent and I almost gave up on each other, and by extension this project, before we could even build something meaningful. But we got there. I’ll detail how in a separate journal entry.

With beats rolling at the correct tempo, I returned to the task at hand.

I think there should be two main signal types: audio and input. The audio type represents the real-time signal and is metaphorically what passes through the cables between modules. The input type represents human input, representing knobs, buttons, switches, key presses, etc. Please update the library to be inline with this.

Confident as ever, the model declared perfect execution of the request in short order. Inspecting its work, I quickly discovered some sleight-of-hand. Instead of reducing the number of signals from eight to two, the agent reduced it to four, keeping some that it decided were too complex to reason through. Beyond this, some parameters that should have been modeled as input signals became function calls to be invoked from other modules. Since this was not some standardized interface, we were back to unnecessary complexity and leaky abstractions.
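For comparison, a two-signal design along the lines of the request might look like the following. This is a hedged sketch with hypothetical names (`Signal`, `Module`, `Vca`), not Fugue's real definitions. The key property is that a parameter change arrives through the same `process` interface as audio, so no module has to call methods on another.

```rust
// Hypothetical types for illustration; Fugue's real definitions may differ.

/// The only two kinds of value that travel between modules.
#[derive(Debug, Clone, Copy, PartialEq)]
enum Signal {
    /// Real-time signal: what metaphorically flows through the patch cables.
    Audio(f32),
    /// Human input: a knob, button, switch, or key press.
    Input(f32),
}

trait Module {
    fn process(&mut self, signals: &[Signal]) -> Signal;
}

/// A simple amplifier whose level is set by input signals, not by direct method calls.
struct Vca {
    level: f32,
}

impl Module for Vca {
    fn process(&mut self, signals: &[Signal]) -> Signal {
        let mut sample = 0.0;
        for s in signals {
            match *s {
                // A knob turn updates the stored level and persists across calls.
                Signal::Input(v) => self.level = v.clamp(0.0, 1.0),
                // Audio inputs are summed.
                Signal::Audio(v) => sample += v,
            }
        }
        Signal::Audio(sample * self.level)
    }
}
```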

It became clear that I needed to re-evaluate my process and how I work with agents. This, too, deserves its own journal entry. The short version is that I switched from using Anthropic models via Copilot to using them with Opencode.

With Opencode ready and eager, I also decided to take a different approach. I knew that I would eventually want a declarative, document-based approach to creating a generative composition, so I created a simple JSON file to use as a guide.

    {
      "version": "1.0.0",
      "title": "Dorian Scale Melody",
      "description": "A generative melody using the Dorian mode with probabilistic note selection",
      "modules": [
        {
          "id": "clock",
          "type": "clock",
          "config": {
            "bpm": 120.0,
            "time_signature": {
              "beats_per_measure": 4,
              "beat_unit": 4
            }
          }
        },
        {
          "id": "melody",
          "type": "melody",
          "config": {
            "root_note": 60,
            "mode": "dorian",
            "scale_degrees": [0, 1, 2, 3, 4, 5, 6],
            "note_weights": [1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0],
            "note_duration": 1.0,
            "oscillator_type": "sine"
          }
        },
        {
          "id": "voice",
          "type": "voice",
          "config": {
            "oscillator_type": "sine"
          }
        },
        {
          "id": "dac",
          "type": "dac",
          "config": {}
        }
      ],
      "connections": [
        {
          "from": "clock",
          "to": "melody"
        },
        {
          "from": "melody",
          "to": "voice"
        },
        {
          "from": "voice",
          "to": "dac"
        }
      ]
    }

From there, I tasked the agent.

This repo contains the beginnings of library for generative, algorithmic music creation heavily inspired by modular synthesis. The `dorian_melody.rs` example in the examples folder represents the first experiment. It works, but I would like a more declarative approach. The `dorian_melody.json` is an example of a document that I believe is equivalent to the imperative approach. Please update the library and example to work with a more declarative approach. The json file is a first stab at the document format and can be modified. The format should be extensible and eventually support injecting business logic and real-time input. However, these are not yet requirements for a PoC.

It took the Opencode agent (using Sonnet 4.5) eight minutes to work through the request. When it eventually completed, it had done more than I asked. The Dorian example now used my declarative document. It had also created two more examples, “Minor Arpeggios” and “Lydian Dream.” This combination of an upgraded agent and new model had proven itself capable.
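A document like `dorian_melody.json` maps naturally onto plain data types. The sketch below is an assumption about how such a patch could be represented and sanity-checked once parsed; the type and function names are hypothetical, parsing itself is left out (it would typically use a JSON crate), and the `config` objects are omitted for brevity.

```rust
use std::collections::HashSet;

// Hypothetical types mirroring the "modules" and "connections" fields of the document.
struct ModuleSpec {
    id: String,
    kind: String, // the document's "type" field; `type` is a reserved word in Rust
}

struct Connection {
    from: String,
    to: String,
}

struct Patch {
    modules: Vec<ModuleSpec>,
    connections: Vec<Connection>,
}

fn module(id: &str, kind: &str) -> ModuleSpec {
    ModuleSpec { id: id.to_string(), kind: kind.to_string() }
}

fn cable(from: &str, to: &str) -> Connection {
    Connection { from: from.to_string(), to: to.to_string() }
}

/// Returns the first problem found: a duplicate id, or a cable pointing at an unknown module.
fn validate(patch: &Patch) -> Result<(), String> {
    let mut ids = HashSet::new();
    for m in &patch.modules {
        if !ids.insert(m.id.as_str()) {
            return Err(format!("duplicate module id: {}", m.id));
        }
    }
    for c in &patch.connections {
        for end in [c.from.as_str(), c.to.as_str()] {
            if !ids.contains(end) {
                return Err(format!("connection references unknown module: {}", end));
            }
        }
    }
    Ok(())
}
```

Checking the graph before building any audio nodes keeps errors in terms of the document (ids and cables) instead of surfacing later as silent output.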

At this point, I decided I had enough functionality to make some music — or at least make something that sounded musical. It was time to push the document structure until it broke. It didn’t take long.

I updated `dorian_melody.json` to have two melodies, two oscillators, but still one clock. This leads to an error "Module clock has multiple outputs." However multiple outputs should be supported for modules, as should multiple inputs. Please add support. If necessary, feel freed to a "Mixer" node to keep things clean.

A few short minutes later, I had support for multiple outputs from modules and a simple mixer module. With the agent on a roll, I asked for frequency modulation.
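The idea can be sketched in a few lines. This is an illustration under assumptions, not the agent's implementation: edges are stored as plain `(from, to)` pairs so that nothing restricts a module to a single cable, and the mixer averages its inputs (one of several reasonable ways to avoid clipping). All names, including the module ids in the usage note, are hypothetical.

```rust
// Hypothetical sketch: connections as (from, to) pairs allow fan-out and fan-in.

/// Every module that `id` feeds into.
fn outputs_of<'a>(edges: &[(&'a str, &'a str)], id: &str) -> Vec<&'a str> {
    edges
        .iter()
        .filter(|(from, _)| *from == id)
        .map(|(_, to)| *to)
        .collect()
}

/// Every module that feeds into `id`.
fn inputs_of<'a>(edges: &[(&'a str, &'a str)], id: &str) -> Vec<&'a str> {
    edges
        .iter()
        .filter(|(_, to)| *to == id)
        .map(|(from, _)| *from)
        .collect()
}

/// Mixer: averages its inputs so the sum stays within the range of a single input.
fn mix(inputs: &[f32]) -> f32 {
    if inputs.is_empty() {
        return 0.0;
    }
    inputs.iter().sum::<f32>() / inputs.len() as f32
}
```

With edges such as `("clock", "melody_a")` and `("clock", "melody_b")`, `outputs_of(&edges, "clock")` returns both melodies, and a mixer node gathers the two voices back into one signal for the DAC.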

Next, add support for FM synthesis and modulation in general. This means it should be possible to connect the output of one oscillator to the frequency input of another. In addition, let's support amplitude modulation as well. This means it should be possible to connect the frequency output from one oscillator to the gain/amplitude input of another. As suggested, we'll need to expand input and edge definitions to support more than one input. Let's used named inputs rather than ordered inputs (objects instead of arrays)

I assumed the model would fail at this, but I was wrong. In retrospect, this was simply because I had not thought the problem through deeply. Ultimately, to modulate two signals, you just multiply them. It was a reminder of the potential pitfalls of outsourcing research and thought.
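For amplitude modulation that really is a multiplication. For frequency modulation, the modulator's output is instead added to the carrier's frequency before the phase advances. A minimal sketch of both, driven by signal inputs, follows; the `Oscillator` type, its fields, and `fm_sample` are hypothetical and not Fugue's implementation.

```rust
use std::f32::consts::TAU;

// Hypothetical sine oscillator whose modulation arrives as per-sample inputs.
struct Oscillator {
    freq: f32,        // base frequency in Hz
    gain: f32,        // base output level
    phase: f32,       // position in the cycle, in [0, 1)
    sample_rate: f32, // samples per second
}

impl Oscillator {
    fn new(freq: f32, sample_rate: f32) -> Self {
        Oscillator { freq, gain: 1.0, phase: 0.0, sample_rate }
    }

    /// Produce one sample.
    /// `freq_mod` is added to the base frequency in Hz (FM);
    /// `gain_mod` multiplies the output level (AM).
    fn tick(&mut self, freq_mod: f32, gain_mod: f32) -> f32 {
        let out = (self.phase * TAU).sin() * self.gain * gain_mod;
        let step = (self.freq + freq_mod) / self.sample_rate;
        self.phase = (self.phase + step).rem_euclid(1.0);
        out
    }
}

/// One oscillator modulating the frequency of another.
/// `depth_hz` scales the modulator's [-1, 1] output into a frequency offset.
fn fm_sample(carrier: &mut Oscillator, modulator: &mut Oscillator, depth_hz: f32) -> f32 {
    let m = modulator.tick(0.0, 1.0);
    carrier.tick(m * depth_hz, 1.0)
}
```

Because modulation arrives as an input on every sample, any module's output can drive `freq_mod` or `gain_mod`; the oscillator does not need to know what is on the other end of the cable.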

After glancing at the implementation, most of which was unnecessarily verbose documentation, I quickly recognized an inelegant solution. Rather than defining modulation in terms of signal inputs, it controlled modulation by setting frequency values on the oscillator module itself. The goal was to support one module modulating the signal of another. Instead, I had a single module that could perform FM synthesis. This was not what I had asked for. I decided to punt on this for the moment and focus on some basics. I had noticed a confusing variety of similar terms used by different modules.

Use more general input names like "frequency", "freq, "amplitude", "gain" etc. That way it is more clear that we can use set gain or frequency.

After this, it was time to merge. The current sprint had dragged on for too long, its scope a little too broad. The goal was to adopt a modular synthesis metaphor. While progress had been made, I couldn’t shake the feeling that I had nudged the library towards something more chaotic. The tools were simultaneously an empowerment and a hindrance, but I cannot blame them. Ultimately, I am responsible for the output. As always, still much more to do.

2026.1.23